Abstract

This systematic literature review aims to synthesize empirical evidence on the patterns, prevalence, and rationalizations of generative artificial intelligence (GenAI) use among university students, with a specific focus on academic dishonesty and ethical boundary negotiations in higher education contexts. Following the PRISMA framework, a comprehensive search was conducted across Scopus, Web of Science, Google Scholar, SpringerLink, ScienceDirect, IEEE Xplore, and ACM Digital Library for peer-reviewed studies published between 2023 and 2025. Inclusion criteria encompassed empirical studies examining GenAI use by university students in academic contexts. After duplicate removal and screening, 23 studies meeting the criteria were included for narrative synthesis and thematic analysis. The review indicates high prevalence rates of GenAI use (typically 50–80%, with individual studies ranging from about 14% to 98%), primarily for academic writing support, concept understanding, literature summarization, and idea generation. Students actively construct ethical boundaries, rationalizing AI use for brainstorming and editing as acceptable while viewing complete assignment generation as dishonest. Rationalizations are influenced by productivity needs, academic pressures, social norms, and unclear institutional policies. Moral disengagement and situational ethics emerge as common justifications for boundary-crossing behaviors. AI-mediated academic dishonesty represents a complex socio-technical phenomenon involving continuous moral negotiation rather than simple cheating. Institutions must develop clear AI policies, integrate AI literacy into curricula, and redesign assessments to promote ethical engagement. Future research should employ longitudinal and cross-cultural designs to better understand the evolving dynamics of student-AI interactions in academic settings.

Keywords: Academic Dishonesty, Ethical Rationalization, Generative Artificial Intelligence, Higher Education, Student Behavior.

Introduction

The rapid advancement of generative artificial intelligence (GenAI) has fundamentally transformed academic practices in higher education [1], [2]. Tools such as ChatGPT, Claude, and Copilot enable students to efficiently generate text, summarize literature, solve problems, and develop academic ideas, marking a significant shift in how knowledge is accessed, processed, and produced [3], [4], [5]. In learning contexts, GenAI is increasingly positioned as a cognitive tool that supports self-regulated learning, reflection, and metacognition, helping students enhance productivity, reduce cognitive load, and overcome academic difficulties [6], [7], [8].

However, the widespread adoption of these technologies also raises substantial concerns regarding academic integrity, originality of work, and the potential erosion of critical thinking skills [9], [10]. The ethical boundary between using AI as a learning aid versus as a substitute for human thinking remains contested [11], [12], creating ambiguity in academic settings. Students are not passive recipients of this technological shift but actively negotiate these boundaries through personal rationalizations, often limiting AI use to brainstorming, editing, or concept understanding while avoiding complete assignment generation [3], [4], [6]. These rationalizations are influenced by personal values, academic pressures, social norms, and perceptions of institutional policies [13], [14], [15].

Despite growing scholarly attention, current evidence remains fragmented, with studies often focusing either on usage patterns and student attitudes [1], [16], [17] or ethical and integrity aspects [4], [11], [18] in isolation. There is limited systematic synthesis integrating usage patterns, prevalence, and student rationalizations within a comprehensive analytical framework. This fragmentation hinders a holistic understanding of how GenAI shapes student learning and academic integrity. Furthermore, variations across cultural, disciplinary, and institutional contexts have not been systematically mapped [19], [20].

Given these gaps, a systematic synthesis of empirical findings is needed to develop a coherent understanding of how university students engage with GenAI, how they justify such use, and where they draw ethical boundaries. This study therefore aims to systematically review empirical evidence on patterns of AI-mediated academic dishonesty and the ethical rationalizations employed by university students.

Methodology

This systematic literature review began by formulating research questions using the SPIDER framework (Sample, Phenomenon of Interest, Design, Evaluation, Research Type) to structure the inquiry. Two research questions guided the review: (1) What are the primary patterns and prevalence of generative AI use among university students for academic tasks? (2) How do students rationalize and justify their use (or non-use) of AI tools within academic integrity boundaries?

Following question formulation, search keywords were developed based on core concepts from the research questions. The key terms included: “generative AI”, “ChatGPT”, “university students”, “higher education”, “academic use”, “AI-assisted writing”, and “academic integrity”. These terms were combined using Boolean operators (AND, OR) and entered into selected databases. The search was conducted using Scopus as the primary database, supplemented by Web of Science, Google Scholar, SpringerLink, ScienceDirect, IEEE Xplore, and ACM Digital Library to ensure comprehensive coverage.
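As an illustration of how the listed key terms can be combined with Boolean operators, the following minimal sketch builds one possible search string. The grouping of terms into three concept blocks (technology, population, context) and the names `CONCEPT_BLOCKS` and `build_query` are assumptions made for this example; the exact string entered into each database is not reported here, and field tags or syntax would need adapting per database.

```python
# Hypothetical grouping of the reported key terms into concept blocks.
# The grouping itself is an assumption, not the reported search strategy.
CONCEPT_BLOCKS = {
    "technology": ["generative AI", "ChatGPT"],
    "population": ["university students", "higher education"],
    "context": ["academic use", "AI-assisted writing", "academic integrity"],
}


def build_query(blocks):
    """Join synonyms with OR inside each block, then join blocks with AND."""
    groups = []
    for terms in blocks.values():
        quoted = " OR ".join(f'"{term}"' for term in terms)
        groups.append(f"({quoted})")
    return " AND ".join(groups)


query = build_query(CONCEPT_BLOCKS)
print(query)
```

Joining synonyms with OR widens recall within a concept, while joining the blocks with AND restricts results to records that touch every concept.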

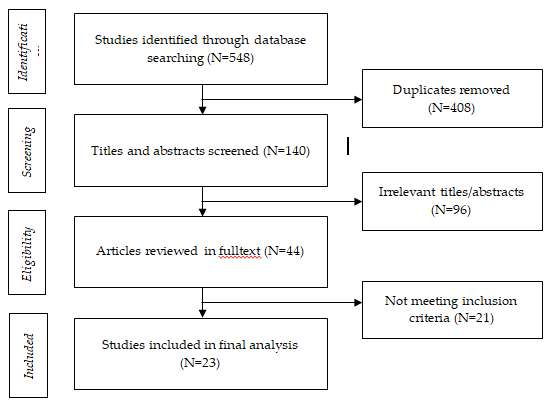

After the articles were collected, duplicates were removed using the Mendeley and Rayyan applications. The author then screened titles and abstracts, followed by a full-text assessment conducted with a second reviewer to ensure reliability. From an initial identification of 548 records, 23 articles met the inclusion criteria and were selected for final synthesis. The study selection flow is presented in a PRISMA flow diagram (Figure 1).

The inclusion criteria were: 1) studies discussing generative AI use by university students in academic contexts, 2) publication between 2023 and 2025, 3) publication in English, and 4) empirical research using quantitative, qualitative, or mixed-methods designs. The exclusion criteria were: 1) studies not focused on higher education students, 2) non-English publications, 3) books, meta-analyses, editorials, and research with unclear methodologies, 4) preprints or non-peer-reviewed works, and 5) articles lacking empirical data relevant to the research questions.

As a review of published literature, no ethical approval was required for this study; however, all included articles were checked for reported ethical compliance in their original publications. Data extraction and synthesis followed narrative and thematic approaches to address the research questions systematically.

Results

Following the complete literature review process outlined in the PRISMA diagram in the methodology section, the researcher obtained 23 articles that met the inclusion criteria. These articles discussed patterns, prevalence, and rationalizations of generative artificial intelligence (GenAI) use by students in academic contexts.

| No | Study (Year, Country) | Sample & Context | Prevalence of AI Use | Primary Use Cases | Key Rationalizations | Ethical Positioning |
|---|---|---|---|---|---|---|
| 1 | Sousa & Cardoso (2025, Portugal) | n=132, Higher education students | 97.70% | Concept clarification, Idea generation | Time-saving, Ease of use | Ethical gray area |
| 2 | Deng et al. (2025, USA) | n=363, Multi-discipline | 86% | Problem-solving, Coding, Academic writing | Learning enhancement | Legitimate, concern over reliance |
| 3 | Archana et al. (2025, India) | n=421, Multi-discipline | >90% | Learning support, Academic writing, Plagiarism check | Trust & ethical concerns | Legitimate with caution |
| 4 | Jamshaid (2025, Pakistan) | n=600, Multi-discipline | 69.80% | Concept understanding, Drafting | Instant tutor | Legitimate with risk awareness |
| 5 | Tragel et al. (2025, Estonia) | n=282, Multi-discipline | 44–52% | Summarization, Revision | Skill development concerns | Mixed perceptions |
| 6 | Johnston et al. (2025, UK) | n=30, Multi-discipline | 70% | Definitions, Planning | Understanding aid | Gray area |
| 7 | Ananin et al. (2025, Russia) | n=450, Multi-discipline | High (no specific %) | Academic writing, Lab work | Responsibility shift | Needs regulation |
| 8 | Lund et al. (2025, USA) | n=401, Multi-discipline | N/A (qualitative focus) | Full writing, Revision | Ethics-based judgment | Variable depending on context |
| 9 | Al Zaidy (2024, Global) | n=3839, Multi-discipline | 86% | Information search, Grammar checking, Summarization | Trust & fairness issues | Needs guidance |
| 10 | Almassaad et al. (2024, Saudi Arabia) | n=859, Higher education | 78.70% | Concept clarification, Translation | Ease, Feedback seeking | Needs guidelines |
| 11 | Arowosegbe et al. (2024, UK) | n=136, Multi-discipline | 52% | Grammar checking, Idea generation | Academic advantage | Plagiarism/privacy concerns |
| 12 | Corbin et al. (2024, Australia) | n=101, Philosophy students | 54.50% | Summarizing readings | Time pressure, Difficulty | Legitimate |
| 13 | Deschenes & McMahon (2024, USA) | n=360, Multi-discipline | ~65% | Summarization, Editing | Fear of plagiarism | Problematic |
| 14 | Holechek & Sreenivas (2024, USA) | n=361, Multi-discipline | 60.75% | Academic writing, Research ideation | Productivity, Creativity | Legitimate if responsible |
| 15 | Johnston et al. (2024, UK) | n=2555, Multi-discipline | >50% | Grammar checking, Concept support | Minor help acceptable | Partial legitimacy |
| 16 | Huang et al. (2024, USA) | n=25, Methods course | <50% | MCQ assistance, Verification | Convenience, Accuracy | Legitimate selectively |
| 17 | Stone (2024, USA) | n=733, Psychology | Mixed (41–63%) | Class tasks, Exploration | Career relevance | Morally mixed |
| 18 | Razmerita (2024, Northern Europe) | n=215, Business students | ~61% | Editing, Theory explanation | Productivity | Ethical with conditions |
| 19 | Barrett & Pack (2023, USA) | n=226, Multi-discipline | N/A (qualitative) | Brainstorming, Outlining | Cognitive offloading | Writing assistance = unethical |
| 20 | Črček & Patekar (2023, Croatia) | n=201, Multi-discipline | 55.20% | Idea generation, Paraphrasing | Expression support | Idea use acceptable |
| 21 | Lyons et al. (2023, USA) | n=371, Computing | Majority | Prompt understanding, Paraphrasing | Preference for own work | Legitimate if properly trained |
| 22 | Smolansky et al. (2023, Australia/USA) | n=425, Multi-discipline | 24–29% | Essays, Code, MCQ | Creativity concern | Conditional acceptance |
| 23 | Black & Tomlinson (2025, USA) | n=39, General education | ~14% | Editing, Evidence search | Improve communication | Emphasize independence |

The 23 selected studies were published between 2023 and 2025. Methodologically, most studies used quantitative survey-based methods [1], [14], [16], some employed qualitative approaches [10], and others applied mixed-methods designs [5], [7]. Research locations spanned various countries, including the United States, United Kingdom, India, Australia, Portugal, Saudi Arabia, Pakistan, Russia, Estonia, Croatia, as well as several studies with global scope. The United States was the most frequent research location [1], [2], [4], [12], followed by the United Kingdom [11], [21] and South Asian and Middle Eastern countries [7], [14], [19].

All studies examined higher education students from various disciplines, both in multidisciplinary contexts and specialized fields such as computing [20], philosophy [18], business [5], and psychology [12]. Sample sizes showed considerable variation, ranging from 25 participants [22] to over 3,800 respondents [16], reflecting differences in research scale and context.

Synthesis results indicate that GenAI use among students is generally high across most studies. Several studies reported usage prevalence near or above 80% [1], [14], [16], [19], [23], while others showed moderate usage rates between 50% and 70% [2], [11], [21]. Conversely, some studies indicated relatively low usage rates, particularly in specific learning contexts, such as Black and Tomlinson [10] and Huang et al. [22], which revealed that not all students actively utilize GenAI in academic activities.
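The grouping of studies by prevalence can be made explicit with a small sketch. The values below are the point or approximate percentages listed in the summary table; studies reporting ranges, bounds such as ">90%", or no percentage are left out. The cut-offs of 50% and 80% and the names `PREVALENCE`, `band`, and `group_by_band` are illustrative assumptions, not thresholds defined by the review.

```python
# Point or approximate prevalence values (%) as listed in the summary table.
# Studies reporting ranges, ">" or "<" bounds, or no percentage are omitted.
PREVALENCE = {
    "Sousa & Cardoso (2025)": 97.70,
    "Deng et al. (2025)": 86.0,
    "Jamshaid (2025)": 69.80,
    "Johnston et al. (2025)": 70.0,
    "Al Zaidy (2024)": 86.0,
    "Almassaad et al. (2024)": 78.70,
    "Arowosegbe et al. (2024)": 52.0,
    "Corbin et al. (2024)": 54.50,
    "Deschenes & McMahon (2024)": 65.0,  # reported as ~65%
    "Holechek & Sreenivas (2024)": 60.75,
    "Razmerita (2024)": 61.0,  # reported as ~61%
    "Črček & Patekar (2023)": 55.20,
    "Black & Tomlinson (2025)": 14.0,  # reported as ~14%
}


def band(pct, low_cut=50.0, high_cut=80.0):
    """Assign a prevalence band; the cut-offs are illustrative assumptions."""
    if pct < low_cut:
        return "low"
    if pct < high_cut:
        return "moderate"
    return "high"


def group_by_band(prevalence):
    """Collect study labels under the band their prevalence falls into."""
    groups = {"low": [], "moderate": [], "high": []}
    for study, pct in prevalence.items():
        groups[band(pct)].append(study)
    return groups


groups = group_by_band(PREVALENCE)
for name, studies in groups.items():
    print(f"{name}: {len(studies)} studies")
```

Because the band boundaries are a modelling choice, a study close to a cut-off (for example one reporting 78.70%) can move between bands if the thresholds are shifted slightly.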

Regarding usage types, most studies demonstrated that GenAI is primarily used to support academic writing, including editing, paraphrasing, and outlining [4], [6], [8]. Additionally, students use AI to understand course concepts and materials [7], [14], summarize readings and literature [17], [18], generate ideas and conduct brainstorming [2], [3], and support problem-solving and programming [1], [9]. These findings indicate that GenAI is generally positioned as a cognitive tool to enhance learning efficiency and reduce academic burden.

Beyond usage patterns, synthesis results also reveal diverse forms of student rationalization in utilizing GenAI. Most students view AI as an instrumental tool to improve learning effectiveness and productivity, as reported by Deng et al. [1] and Razmerita [5]. Jamshaid [7] describes AI as an "instant tutor" that helps overcome conceptual difficulties, while Holechek and Sreenivas [2] and Sousa and Cardoso [23] emphasize AI's role in enhancing creativity and time efficiency.

Simultaneously, many students establish moral boundaries in GenAI use. Johnston et al. [11] and Črček and Patekar [6] demonstrate that using AI for editing and brainstorming is considered acceptable, while using it to generate complete assignments is deemed unethical. Barrett and Pack [3] assert that this boundary reflects students' efforts to maintain intellectual autonomy and academic integrity.

Several studies also indicate ethical ambiguity in AI use. Stone [12] found that some students use AI in prohibited or ambiguous contexts due to social pressure and the perception that such practices have become normative. Arowosegbe et al. [13] reported that concerns about plagiarism and privacy often coexist with perceptions of academic benefits, while Smolansky et al. [9] showed that students continue using AI despite awareness of its potential impact on creativity.

Individual ethical attitudes also play a crucial role in determining AI use. Lund et al. [4] demonstrated that students' moral beliefs are a primary factor in deciding whether AI is used as a learning aid or viewed as a form of cheating. Black and Tomlinson [10] found that students emphasizing intellectual independence tend to be more skeptical of intensive AI use.

Furthermore, synthesis results indicate that GenAI use is influenced by a combination of internal and external factors. Internal factors include needs for efficiency and productivity [2], levels of academic self-confidence [10], personal moral beliefs [4], and attitudes toward technology [1]. External factors encompass academic pressure and workload [7], social norms in campus environments [12], unclear institutional policies [13], and technology access [14].

Overall, this systematic review's findings demonstrate that GenAI use has become a relatively common practice in higher education. Students primarily utilize AI to support writing, concept understanding, and learning efficiency. This usage is rationalized through considerations of productivity, moral boundaries, social pressure, and personal ethical beliefs. These findings suggest that AI use cannot be understood merely as a form of academic dishonesty, but rather as a process of moral and strategic negotiation in responding to academic demands and technological developments.

Discussion

The findings of this systematic literature review demonstrate that generative artificial intelligence (GenAI) use among students has become a widespread and relatively integrated practice in academic activities. The high prevalence of use reported across diverse geographical and disciplinary contexts indicates that GenAI is no longer viewed as experimental technology but as part of the contemporary digital learning ecosystem [1], [16], [19]. This aligns with broader trends emphasizing accelerated AI-based technology adoption in post-pandemic higher education [4], [5].

The dominance of GenAI use for supporting writing, concept understanding, and literature summarization reveals that students primarily utilize AI as a cognitive and metacognitive aid. In this context, AI functions as cognitive offloading that helps students manage academic task complexity, reduce mental burden, and enhance learning efficiency. This supports perspectives positioning AI as a "co-learning agent" in the learning process [7]. However, dependence on AI as a cognitive mediator raises concerns about potential long-term declines in independent and reflective thinking skills, as highlighted by Smolansky et al. [9].

Beyond being a learning tool, GenAI also becomes an object of moral negotiation in students' academic practices. The synthesis shows that students actively construct ethical boundaries in AI use, particularly by distinguishing between uses considered "supportive" versus those that "replace" thinking processes. This pattern aligns with findings that students tend to accept AI for editing and brainstorming but reject its use for complete assignment generation [6], [11]. This phenomenon reflects students' efforts to maintain academic identity and intellectual autonomy amid technological penetration.

However, these moral boundaries are not always stable or consistent. Several studies indicate ethical ambiguity influenced by social pressure, group norms, and unclear institutional policies [12], [13]. In such situations, students often adopt defensive rationalizations, such as viewing AI use as common practice or as a response to excessive academic demands. This pattern suggests that academic dishonesty in the AI context does not always originate from manipulative intent but often results from complex interactions between structural pressures and normative uncertainty.

Findings regarding the role of individual moral beliefs reinforce the perspective that AI use cannot be understood homogeneously. Lund et al. [4] and Black and Tomlinson [10] show that students with strong ethical orientations and commitment to intellectual independence tend to be more selective in utilizing AI. This indicates that psychological factors and personal values are as important as external regulation in shaping student academic behavior.

Furthermore, analysis of internal and external factors reveals that GenAI use results from multidimensional dynamics. Needs for efficiency, academic self-confidence, and attitudes toward technology interact with task pressure, social norms, and institutional policies. The absence of clear guidelines often forces students to develop ethical standards independently, ultimately resulting in varied practices and interpretations of academic dishonesty boundaries [7], [12].

Within a broader framework, these findings support the view that academic integrity in the AI era cannot be reduced to a simple dichotomy between "honest" and "cheating." Instead, GenAI use practices reflect ongoing negotiation processes between traditional educational values, performative demands of academic systems, and opportunities offered by technology. Thus, academic dishonesty in the AI context needs to be understood as a socio-technical phenomenon rooted in institutional structures, academic culture, and student subjectivity.

The theoretical implication of these findings is the need to develop new conceptual frameworks capable of explaining relationships between AI technology, academic morality, and learner identity formation. Approaches focusing solely on plagiarism detection or disciplinary sanctions potentially overlook the reflective and pedagogical dimensions of AI use. Conversely, approaches integrating AI literacy, ethics education, and adaptive learning design may offer more sustainable strategies.

Thus, this discussion affirms that student GenAI use is not merely a technical or administrative issue but an epistemological and ethical challenge for higher education. How institutions respond to this phenomenon will significantly determine whether AI develops as an emancipatory tool in learning or instead deepens academic dishonesty problems in the future.

Conclusion

This systematic literature review aimed to synthesize empirical evidence on generative artificial intelligence (GenAI) use among university students in academic contexts. Analysis of 23 studies published between 2023 and 2025 confirms that GenAI has become integral to higher education learning practices, with students widely utilizing these tools to support academic writing, concept understanding, literature summarization, and problem-solving. The review reveals that GenAI use cannot be understood simply as academic dishonesty. Instead, students actively construct moral rationalizations and boundaries, distinguishing between uses that support learning processes and those that replace intellectual effort. These rationalizations are shaped by internal factors such as moral beliefs and academic orientation, and external factors including task pressure, social norms, and institutional policies.

These findings affirm that AI use in academic environments results from complex ethical and strategic negotiation processes. Academic dishonesty in the GenAI context often emerges not merely from intent to cheat, but as a response to regulatory ambiguity, academic performance demands, and evolving digital learning ecosystems. Thus, this study concludes that addressing AI-mediated academic dishonesty requires moving beyond surveillance and punitive approaches toward more holistic strategies that recognize the nuanced moral reasoning and contextual factors influencing student behavior in technology-rich learning environments.

References

[1] N. Deng, E. J. Liu, and X. Zhai, “Understanding University Students’ Use of Generative AI: The Roles of Demographics and Personality Traits,” arXiv:2505.02863, May 19, 2025, doi: 10.48550/arXiv.2505.02863

[2] S. Holechek and V. Sreenivas, “Abstract 1557 Generative AI in Undergraduate Academia: Enhancing Learning Experiences and Navigating Ethical Terrains,” Journal of Biological Chemistry, vol. 300, p. 105921, Mar. 2024, doi: 10.1016/j.jbc.2024.105921

[3] A. Barrett and A. Pack, “Not quite eye to A.I.: student and teacher perspectives on the use of generative artificial intelligence in the writing process,” Int J Educ Technol High Educ, vol. 20, no. 1, p. 59, Nov. 2023, doi: 10.1186/s41239-023-00427-0

[4] B. Lund et al., “Student Perceptions of AI-Assisted Writing and Academic Integrity: Ethical Concerns, Academic Misconduct, and Use of Generative AI in Higher Education,” AI in Education, vol. 1, no. 1, Sep. 2025, doi: 10.3390/aieduc1010002

[5] L. Razmerita, “Human-AI Collaboration: A Student-Centered Perspective of Generative AI Use in Higher Education,” ECEL, vol. 23, no. 1, pp. 320–329, Oct. 2024, doi: 10.34190/ecel.23.1.3008

[6] N. Črček and J. Patekar, “Writing with AI: University Students’ Use of ChatGPT,” Journal of Language and Education, vol. 9, no. 4, pp. 128–138, Dec. 2023, doi: 10.17323/jle.2023.17379

[7] S. Jamshaid, “AI and study habits among Generation Z: A mixed-methods case study of undergraduate learners at the University of Gujrat,” Research Journal for Social Affairs, vol. 7, no. 1, 2025.

[8] A. Deschenes and M. McMahon, “A Survey on Student Use of Generative AI Chatbots for Academic Research,” Evidence Based Library and Information Practice, vol. 19, no. 2, pp. 2–22, Jun. 2024, doi: 10.18438/eblip30512

[9] A. Smolansky, A. Cram, C. Raduescu, S. Zeivots, E. Huber, and R. Kizilcec, “Educator and Student Perspectives on the Impact of Generative AI on Assessments in Higher Education,” Jul. 2023, pp. 378–382, doi: 10.1145/3573051.3596191

[10] R. W. Black and B. Tomlinson, “University students describe how they adopt AI for writing and research in a general education course,” Sci Rep, vol. 15, no. 1, p. 8799, Mar. 2025, doi: 10.1038/s41598-025-92937-2

[11] H. Johnston, R. F. Wells, E. M. Shanks, T. Boey, and B. N. Parsons, “Student perspectives on the use of generative artificial intelligence technologies in higher education,” Int J Educ Integr, vol. 20, no. 1, p. 2, Feb. 2024, doi: 10.1007/s40979-024-00149-4

[12] B. Stone, “Generative AI in Higher Education: Uncertain Students, Ambiguous Use Cases, and Mercenary Perspectives,” Teaching of Psychology, vol. 52, Dec. 2024, doi: 10.1177/00986283241305398

[13] A. Arowosegbe, J. S. Alqahtani, and T. Oyelade, “Perception of generative AI use in UK higher education,” Front. Educ., vol. 9, Oct. 2024, doi: 10.3389/feduc.2024.1463208

[14] A. Almassaad, H. Alajlan, and R. Alebaikan, “Student Perceptions of Generative Artificial Intelligence: Investigating Utilization, Benefits, and Challenges in Higher Education,” Systems, vol. 12, no. 10, Sep. 2024, doi: 10.3390/systems12100385

[15] D. P. Ananin, R. V. Komarov, and I. M. Remorenko, “‘When Honesty is Good, for Imitation is Bad’: Strategies for Using Generative Artificial Intelligence in Russian Higher Education Institutions,” Vysš. obraz. Ross. (Print), vol. 34, no. 2, pp. 31–50, Feb. 2025, doi: 10.31992/0869-3617-2025-34-2-31-50

[16] A. A. Zaidy, “The Impact of Generative AI on Student Engagement and Ethics in Higher Education,” Journal of Information Technology, Cybersecurity, and Artificial Intelligence, vol. 1, no. 1, pp. 30–38, Nov. 2024, doi: 10.70715/jitcai.2024.v1.i1.004

[17] I. Tragel, L.-M. Komissarov, E. Miilman, N. K. Teiva, and M.-M. Tammepõld, “A Year of Generative AI: Observations from a Survey among University Students in Estonia,” Journal of Academic Writing, vol. 15, no. S2, pp. 1–10, Apr. 2025, doi: 10.18552/joaw.v15iS2.1118

[18] T. Corbin et al., “Reading at university in the time of GenAI,” Learning Letters, vol. 3, pp. 35–35, Dec. 2024, doi: 10.59453/ll.v3.35

[19] S. N. Archana, V. R. Renjith, P. K. Padmakumar, S. C., and N. Aboobaker, “AI assisted learning and research: an exploratory study among university students and scholars,” Discov Educ, vol. 4, no. 1, p. 390, Oct. 2025, doi: 10.1007/s44217-025-00814-x

[20] M. Lyons, E. Deitrick, and J. Ball, “Characterizing computing students’ use of generative AI,” presented at the ASEE Annual Conference & Exposition, 2023.

[21] H. Johnston, M. Eaton, I. Henry, E.-M. Deeley, and B. N. Parsons, “Discovering how students use generative artificial intelligence tools for academic writing purposes,” Journal of Learning Development in Higher Education, no. 34, Feb. 2025, doi: 10.47408/jldhe.vi34.1301

[22] D. Huang, Y. Huang, and J. J. Cummings, “Exploring the integration and utilisation of generative AI in formative e-assessments: A case study in higher education,” Australasian Journal of Educational Technology, vol. 40, no. 4, pp. 1–19, Sep. 2024, doi: 10.14742/ajet.9467

[23] A. E. Sousa and P. Cardoso, “Use of Generative AI by Higher Education Students,” Electronics, vol. 14, no. 7, Mar. 2025, doi: 10.3390/electronics14071258